21 Finding a Journal’s Impact Factor

I mentioned earlier that this process is one of elimination. In a world where information is plentiful, we can be a bit demanding about what counts as evidence. When it comes to research, one gating expectation can be that any published academic research cited for a claim comes from a respected, peer-reviewed journal.

Consider this journal:

Is it a journal that gives any authority to this article? Or is it just another web-based paper mill?

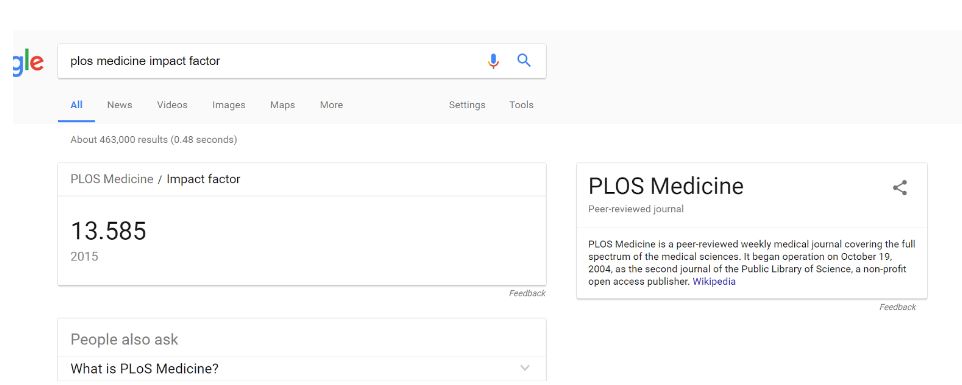

Our first check is to find the journal’s “impact factor,” a measure of its influence in the academic community. Although it is a flawed metric for assessing the relative importance of journals, it is a useful tool for quickly identifying journals that are not part of a known circle of academic discourse or that are not peer-reviewed.
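For reference, the commonly reported two-year impact factor (the figure published in Clarivate’s Journal Citation Reports) is, roughly, a ratio of recent citations to recent articles. This is a simplified statement of the definition, not the full counting rules:

$$
\mathrm{IF}_{Y} = \frac{\text{citations received in year } Y \text{ by items published in } Y-1 \text{ and } Y-2}{\text{number of citable items published in } Y-1 \text{ and } Y-2}
$$

With purely hypothetical numbers: a journal whose 200 citable items from the previous two years drew 500 citations this year would have an impact factor of $500 / 200 = 2.5$. Read this way, an impact factor of 1 means the journal’s recent articles were cited about once each, on average, in the year measured.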

We search Google for PLOS Medicine, and it pulls up a knowledge panel for us with an impact factor.

Impact factors for the most influential journals can run into the 30s and beyond, but we’re using this as a quick elimination test, not a ranking, so we’re happy with anything over 1. We still have work to do on this article, but it’s worth keeping in the mix.

What about this one?

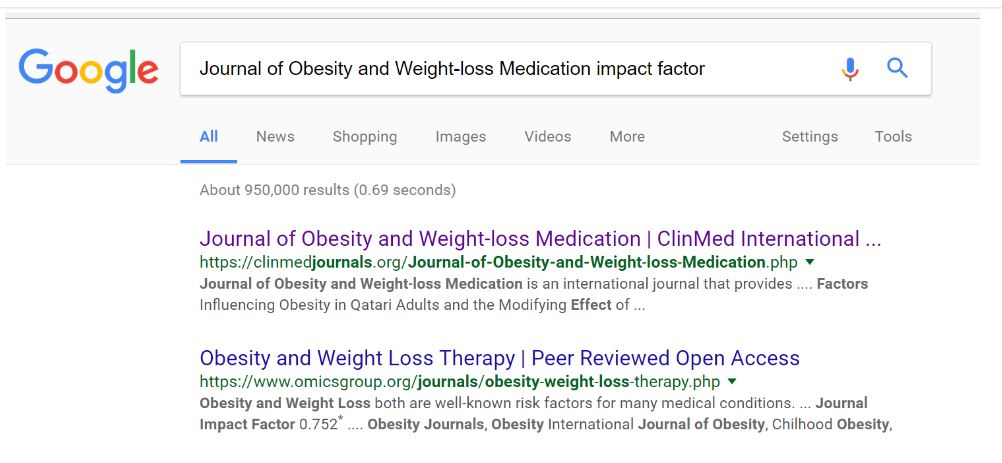

In this case, the top result links to the journal itself, but there is no knowledge panel, because no impact factor is registered for this journal.

Again, we stress that the article here may be excellent; we don’t know. Likewise, articles published in the most prestigious journals are occasionally pure junk. Be careful in your use of impact factor: a journal with an impact factor of 10 is not necessarily better than one with an impact factor of 3, especially if you are dealing with a niche subject.

But in a quick and dirty analysis, we have to say that the PLOS Medicine article is more trustworthy than the Journal of Obesity and Weight-loss Medication article. In fact, if you were deciding whether to reshare a story in your feed and the evidence for the story came from this Obesity journal, I’d skip reposting it entirely.